2019 JSALT Plenary Talks

2019 Sixth Frederick Jelinek Memorial Summer Workshop

Click the video below for a playlist of presentations from this year’s workshop:

Wed. June 26th, 10:30 AM to 11:45 AM

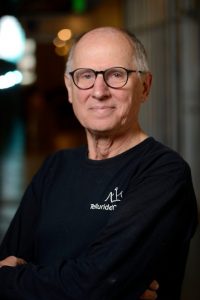

John Hansen (University of Texas at Dallas, USA): Robust Speaker Diarization and Recognition in Naturalistic Data Streams: One Small Step (Challenges for Multi-Speaker & Learning Spaces)

Abstract: Speech technology has advanced significantly beyond general speech recognition for voice command and telephone applications. Today, the emergence of many voice-enabled speech systems has created a need for more effective voice capture and automatic speech and speaker recognition. The ability to employ speech and language technology to assess human-to-human interactions is opening up new research paradigms which can have a profound impact on assessing human interaction, including personal communication traits, and contribute to improving the quality of life and educational experience of individuals. In this talk, we will explore recent research trends on automatic audio diarization and speaker recognition for audio streams which include multi-tracks, speakers, and environments. Specifically, we will consider (i) the Prof-Life-Log corpus (daily audio logging over multiple years), (ii) education-based child and student Peer-Led Team Learning (PLTL), and (iii) NASA Apollo-11 massive multi-track audio processing (19,000 hrs of data). These domains will be discussed in terms of algorithmic advancements, as well as directions for continued research.

Bio: John H.L. Hansen received the Ph.D. & M.S. degrees from Georgia Institute of Technology, and the B.S.E.E. degree from Rutgers Univ., N.J. He joined Univ. of Texas at Dallas (UTDallas), Erik Jonsson School of Engineering & Computer Science in 2005, where he is Associate Dean for Research, Professor of Electrical & Computer Engineering, Distinguished Univ. Chair in Telecommunications Engineering, and holds a joint appointment in the School of Behavioral & Brain Sciences (Speech & Hearing). At UTDallas, he established The Center for Robust Speech Systems (CRSS). He is an ISCA Fellow, IEEE Fellow, past Member and TC Chair of IEEE Signal Processing Society Speech & Language Processing Technical Committee (SLTC), ISCA Distinguished Lecturer, and Technical Advisor to the U.S. Delegate for NATO (IST/TG-01). He currently serves as ISCA President. He has supervised 88 PhD/MS thesis candidates, was the recipient of the 2005 Univ. of Colorado Teacher Recognition Award, and is author/co-author of 735 journal/conference papers, including 11 books in the field of speech processing and language technology. He served as General Chair and Organizer for Interspeech-2002, Co-Organizer and Technical Program Chair for IEEE ICASSP-2010, and Co-General Chair and Organizer for IEEE Workshop on Spoken Language Technology (SLT-2014) (Lake Tahoe, NV).

Location: A-1150

Wed. July 3rd, 10:30 AM to 11:45 AM

Hugo Larochelle (Google AI & Université de Montréal & MILA, Canada): Learning to generalize from few examples with meta-learning

Abstract: A lot of the recent progress on many AI tasks was enabled in part by the availability of large quantities of labeled data. Yet, humans are able to learn concepts from as little as a handful of examples. Meta-learning has been a very promising framework for addressing the problem of generalizing from small amounts of data, known as few-shot learning. In this talk, I’ll present an overview of the recent research that has made exciting progress on this topic. I will also share my thoughts on the challenges and research opportunities that remain in few-shot learning, including a proposal for a new benchmark.

Bio: Hugo Larochelle is a Research Scientist at Google Brain and lead of the Montreal Google Brain team. He is also a member of Yoshua Bengio’s Mila and an Adjunct Professor at the Université de Montréal. Previously, he was an Associate Professor at the University of Sherbrooke. Larochelle also co-founded Whetlab, which was acquired in 2015 by Twitter, where he then worked as a Research Scientist in the Twitter Cortex group. From 2009 to 2011, he was also a member of the machine learning group at the University of Toronto, as a postdoctoral fellow under the supervision of Geoffrey Hinton. He obtained his Ph.D. at the Université de Montréal, under the supervision of Yoshua Bengio. Finally, he has a popular online course on deep learning and neural networks, freely accessible on YouTube.

Location: A-1600

Wed. July 10th, 10:30 AM to 11:45 AM

Lori Levin (Carnegie Mellon University, USA): What the bleep is a morphosyntactic strategy?

Abstract: The field of language technologies has had a tumultuous relationship with the field of linguistics. Linguistic theory is too abstract and you have to study it for a long time to see how it can help. On the other hand, the field of language technologies still relies on annotated data and on linguistic output representations such as dependency trees, parts of speech, or morphological tags. This talk is about something that will help you: the concept of morphosyntactic strategy. It is (mostly) not theoretical. It will demystify languages you don’t speak and help you design input representations and techniques that work for more languages and do meaningful error analysis. This talk will be partly educational (what is a morphosyntactic strategy), partly positional (where is the concept of morphosyntactic strategy missing in the field of language technologies and where it can help) and partly research oriented (a study on detecting the concept of causality in English text). I will relate the concept of morphosyntactic strategy to the framework of Construction Grammar and the fascinating historical process of grammaticalization (how meanings become grammar).

Bio: Lori Levin is a linguist (BA UPenn 1979, Ph.D. MIT 1986) with over 35 years of experience in NLP. She currently specializes in NLP in low-resource scenarios and has also specialized in grammar formalisms, lexicons, discourse annotation, and morphology. The first twenty years of her career were focused on multi-lingual meaning representations for rule-based MT.

Location: A-1600

Wed. July 17th, 11:00 AM to 12:00 PM

Yoshua Bengio (MILA, Canada): Deep Representation Learning

Abstract: How could humans or machines discover high-level abstract representations which are not directly specified in the data they observe? The original goal of deep learning is to enable learning of such representations in a way that disentangles underlying explanatory factors. Ideally, this would mean that high-level semantic factors could be decoded from top-level representations with simple predictors like a linear classifier, trainable from very few examples. However, there are too many ways of representing the same information, and it is thus necessary to provide additional clues to the learner, which can be thought about as priors. We highlight several such priors. One of those priors is that high-level factors measured at different times (or places) have high mutual information, i.e., can be predicted from each other and contain many bits of information. We present recent work in unsupervised representation learning towards maximizing the mutual information between random variables. Finally, we introduce the novel idea that good representations should be robust under changes in distribution and show that this can, in fact, be used in a meta-learning setup to identify causal variables and how they are causally related.

Bio: Yoshua Bengio is recognized as one of the world’s leading experts in artificial intelligence (AI) and a pioneer in deep learning.

Since 1993, he has been a professor in the Department of Computer Science and Operational Research at the Université de Montréal. Holder of the Canada Research Chair in Statistical Learning Algorithms, he is also the founder and scientific director of Mila, the Quebec Institute of Artificial Intelligence, which is the world’s largest university-based research group in deep learning.

His research contributions have been undeniable. In 2018, Yoshua Bengio collected the largest number of new citations in the world for a computer scientist thanks to his many publications. The following year, he earned the prestigious Killam Prize in computer science from the Canada Council for the Arts and was co-winner of the A.M. Turing Award, which he received jointly with Geoffrey Hinton and Yann LeCun, as well as the Excellence Awards of the Fonds de recherche du Québec – Nature et technologies.

Concerned about the social impact of AI, he actively contributed to the development of the Montreal Declaration for the Responsible Development of Artificial Intelligence.

Location: A-1600

Thu. July 18th, 10:30 AM to 11:45 AM

Xuedong Huang (Microsoft, USA): Speech and Language processing

Abstract: Deep learning algorithms, supported by the availability of powerful cloud computing infrastructure and massive training data, constitute the most significant driving force in our AI evolution journey. In the past three years, Microsoft reached several historical AI milestones, being the first to achieve human parity in the following public benchmark tasks that have been broadly used in the speech and language community: 2017 Speech Recognition on the conversational speech transcription task (Switchboard); 2018 Machine Translation on the Chinese to English news translation task (WMT17); 2019 Conversational QA on the Stanford conversational question and answering task (CoQA). A new neural text-to-speech service also helps machines speak like people. These breakthroughs have a profound impact on numerous spoken language applications. Furthermore, they are enabling more complex applications such as meeting transcription, which requires real-time, multi-person, far-field speech transcription and speaker attribution. In this talk, he will present an overview of Microsoft’s work in these spaces as well as discuss challenges and some technical details.

Bio: Dr. Xuedong Huang is a Microsoft Technical Fellow in Microsoft Cloud and AI. He leads Microsoft’s Speech and Language Group. In 1993, Huang joined Microsoft to found the company’s speech technology group. As the general manager of Microsoft’s spoken language efforts for over a decade, he helped to bring speech recognition to the mass market by introducing SAPI to Windows in 1995 and Speech Server to the enterprise call center in 2004. Before Microsoft, he was on the faculty at Carnegie Mellon University. He received the Allen Newell Research Excellence Leadership Medal in 1992 and the IEEE Best Paper Award in 1993. He is an IEEE & ACM fellow. He was named Asian American Engineer of the Year (2011), one of Wired Magazine’s 25 Geniuses Who Are Creating the Future of Business (2016), and one of AI World’s Top 10 (2017). He holds over 100 patents and has published over 100 papers & 2 books.

Location: A-1600

Wed. July 24th, 10:30 AM to 11:45 AM

Nima Mesgarani (Columbia University, USA): Speech processing in the human brain meets deep learning

Abstract: Speech processing technologies have seen tremendous progress since the advent of deep learning, where the most challenging problems no longer seem out of reach. In parallel, deep learning has advanced the state of the art in processing neural responses to speech in the human brain. This talk reports progress in three important areas of research: I) Decoding (reconstructing) speech from the human auditory cortex to establish a direct interface with the brain. Such an interface not only can restore communication for paralyzed patients but also has the potential to transform human-computer interaction technologies; II) Auditory Attention Decoding, which aims to create a mind-controlled hearing aid that can track the brain waves of a listener to identify and amplify the voice of the attended speaker in a crowd. Such a device could help hearing-impaired listeners communicate more effortlessly with others in noisy environments; and III) More accurate models of the transformations that the brain applies to speech at different stages of the human auditory pathway. This is achieved by training deep neural networks to learn the mapping from sound to the neural responses. Using a novel method to study the exact function learned by these neural networks has led to new insights on how the human brain processes speech. In turn, these new insights motivate distinct computational properties that can be incorporated into the neural network models to better capture the properties of speech processing in the human auditory cortex.

Bio: Nima Mesgarani is an associate professor at the Electrical Engineering Department and Mind-Brain-Behavior Institute of Columbia University in the City of New York. He received his Ph.D. from the University of Maryland and was a postdoctoral scholar in the Center for Language and Speech Processing at Johns Hopkins University and the Neurosurgery Department of the University of California San Francisco. He has been named a Pew Scholar for Innovative Biomedical Research and has received several distinctions including the National Science Foundation Early Career Award and Kavli Institute for Brain Science Award. His interdisciplinary research combines theoretical and experimental techniques to model the neural mechanisms involved in human speech communication, which critically impacts research in modeling speech processing and speech brain-computer interface technologies.

Location: A-1600

Wed. July 31st, 10:30 AM to 11:45 AM

Hynek Hermansky (Johns Hopkins University, USA): Machines: If You Can’t Beat Them, Join Them

Abstract: The engineering trend that advocates the study of hearing and the use of that knowledge in speech technology is currently being ridiculed in light of recent advances in big-data machine learning (ML). However, not all is lost for those who believe with Roman Jakobson that “We speak in order to be heard….”. We argue that it is possible and perhaps even desirable to join the ML crowd in order to study hearing. Speech evolved to be heard and therefore properties of hearing are imprinted on speech. Consequently, properly structured ML-based speech technology often yields human-like processing strategies, which indicate the particular hearing properties that are being employed in the processing of speech. As an example, our research supports spectral-dynamics-based speech processing in multiple parallel streams, which leads to efficient strategies for adaptation to new situations.

Bio: Hynek Hermansky (LF’17, F’01, SM’92, M’83, SM’78) received the Dr. Eng. degree from the University of Tokyo, and the Dipl. Ing. degree from the Brno University of Technology, Czech Republic. He is the Julian S. Smith Professor of Electrical Engineering and the Director of the Center for Language and Speech Processing at the Johns Hopkins University in Baltimore, Maryland. He is also a Professor at the Brno University of Technology, Czech Republic. He has been working in speech processing for over 30 years, previously as a Director of Research at the IDIAP Research Institute, Martigny and a Titular Professor at the Swiss Federal Institute of Technology in Lausanne, Switzerland, a Professor and Director of the Center for Information Processing at OHSU Portland, Oregon, a Senior Member of Research Staff at U S WEST Advanced Technologies in Boulder, Colorado, a Research Engineer at Panasonic Technologies in Santa Barbara, California, and a Research Fellow at the University of Tokyo. His main research interests are in acoustic processing for speech recognition.

He is a Life Fellow of IEEE and a Fellow of the International Speech Communication Association (ISCA). He is the General Chair of INTERSPEECH 2021, was the General Chair of the 2013 IEEE Automatic Speech Recognition and Understanding Workshop, was in charge of plenary sessions at the 2011 ICASSP in Prague, and was the Technical Chair at the 1998 ICASSP in Seattle and an Associate Editor for IEEE Transactions on Speech and Audio Processing. He is also a Member of the Editorial Board of Speech Communication, was twice an elected Member of the Board of ISCA, a Distinguished Lecturer for IEEE, a Distinguished Lecturer for ISCA, the recipient of the 2013 ISCA Medal for Scientific Achievement, and the recipient of the 2020 IEEE Flanagan Award.

Location: A-1600